14 Appendix: A Sample of Python Libraries

Python has hundreds of third-party libraries, specialized software packages that extend the functionality of Python. NLTK is one such library. To realize the full power of Python programming, you should become familiar with several other libraries. Most of these will need to be manually installed on your computer.

14.1 Matplotlib

Python has some libraries that are useful for visualizing language data. The Matplotlib package supports sophisticated plotting functions with a MATLAB-style interface, and is available from http://matplotlib.sourceforge.net/.

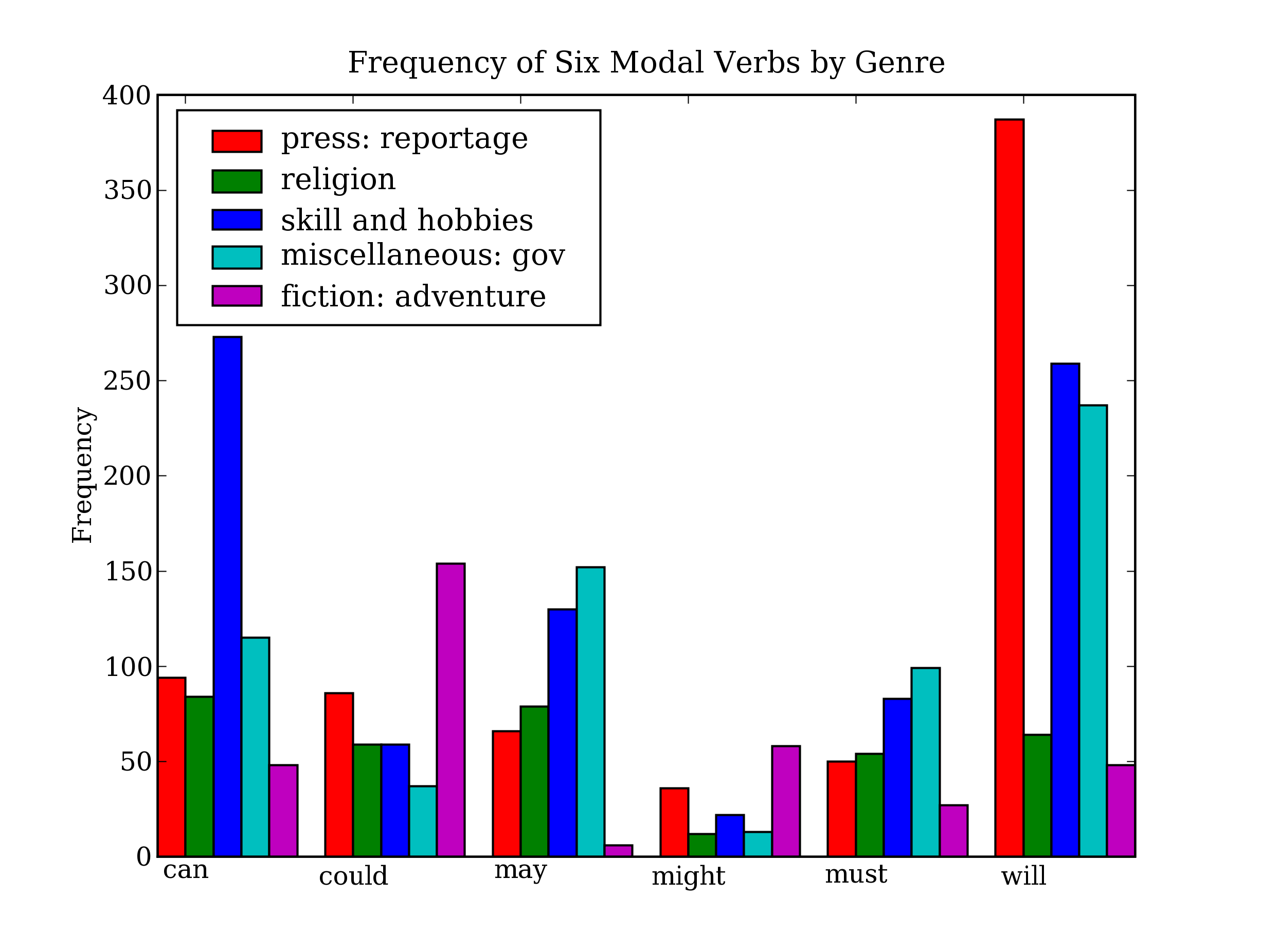

So far we have focused on textual presentation and the use of formatted print statements to get output lined up in columns. It is often very useful to display numerical data in graphical form, since this often makes it easier to detect patterns. For example, in 3.7 we saw a table of numbers showing the frequency of particular modal verbs in the Brown Corpus, classified by genre. The program in 14.1 presents the same information in graphical format. The output is shown in 14.2 (a color figure in the graphical display).

| ||

| ||

Example 14.1 (code_modal_plot.py): Figure 14.1: Frequency of Modals in Different Sections of the Brown Corpus |

Figure 14.2: Bar Chart Showing Frequency of Modals in Different Sections of Brown Corpus: this visualization was produced by the program in 14.1.

From the bar chart it is immediately obvious that may and must have almost identical relative frequencies. The same goes for could and might.

It is also possible to generate such data visualizations on the fly.

For example, a web page with form input could permit visitors to specify

search parameters, submit the form, and see a dynamically generated

visualization.

To do this we have to specify the Agg backend for matplotlib,

which is a library for producing raster (pixel) images ![[1]](callouts/callout1.gif) .

Next, we use all the same Matplotlib methods as before, but instead of displaying the

result on a graphical terminal using pyplot.show(), we save it to a file

using pyplot.savefig()

.

Next, we use all the same Matplotlib methods as before, but instead of displaying the

result on a graphical terminal using pyplot.show(), we save it to a file

using pyplot.savefig() ![[2]](callouts/callout2.gif) . We specify the filename

then print HTML markup that directs the web browser to load the file.

. We specify the filename

then print HTML markup that directs the web browser to load the file.

14.2 NetworkX

The NetworkX package is for defining and manipulating structures consisting of

nodes and edges, known as graphs. It is

available from https://networkx.lanl.gov/.

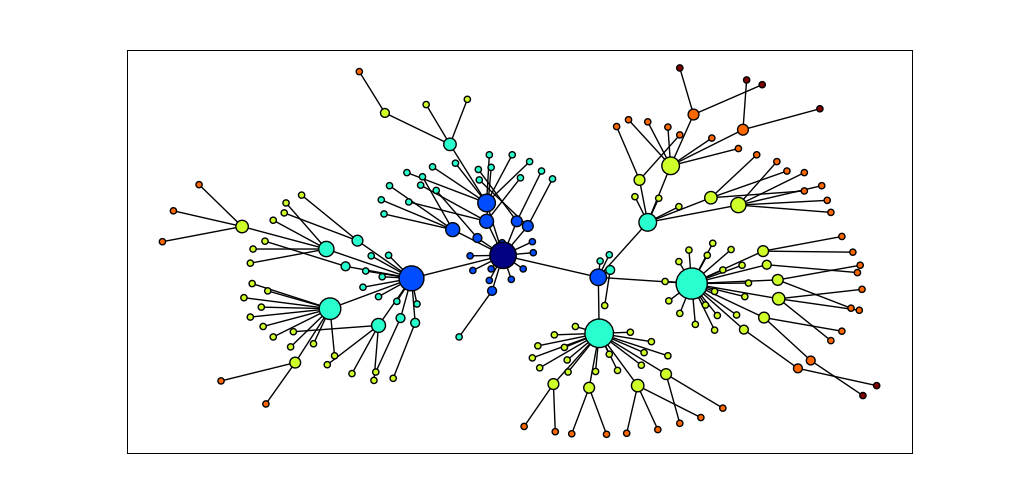

NetworkX can be used in conjunction with Matplotlib to

visualize networks, such as WordNet (the semantic network we

introduced in 4). The program in 14.3

initializes an empty graph ![[3]](callouts/callout3.gif) then traverses

the WordNet hypernym hierarchy adding edges to

the graph

then traverses

the WordNet hypernym hierarchy adding edges to

the graph ![[1]](callouts/callout1.gif) .

Notice that the traversal is recursive

.

Notice that the traversal is recursive ![[2]](callouts/callout2.gif) ,

applying the programming technique discussed in

sec-algorithm-design_. The resulting display is shown in 14.4.

,

applying the programming technique discussed in

sec-algorithm-design_. The resulting display is shown in 14.4.

| ||

Example 14.3 (code_networkx.py): Figure 14.3: Using the NetworkX and Matplotlib Libraries |

Figure 14.4: Visualization with NetworkX and Matplotlib: Part of the WordNet hypernym hierarchy is displayed, starting with dog.n.01 (the darkest node in the middle); node size is based on the number of children of the node, and color is based on the distance of the node from dog.n.01; this visualization was produced by the program in 14.3.

14.3 csv

Language analysis work often involves data tabulations, containing information about lexical items, or the participants in an empirical study, or the linguistic features extracted from a corpus. Here's a fragment of a simple lexicon, in CSV format:

We can use Python's CSV library to read and write files stored in this format.

For example, we can open a CSV file called lexicon.csv ![[1]](callouts/callout1.gif) and iterate over its rows

and iterate over its rows ![[2]](callouts/callout2.gif) :

:

Each row is just a list of strings. If any fields contain numerical data, they will appear as strings, and will have to be converted using int() or float().

14.4 NumPy

The NumPy package provides substantial support for numerical processing in Python. NumPy has a multi-dimensional array object, which is easy to initialize and access:

|

NumPy includes linear algebra functions. Here we perform singular value decomposition on a matrix, an operation used in latent semantic analysis to help identify implicit concepts in a document collection.

|

NLTK's clustering package nltk.cluster makes extensive use of NumPy arrays, and includes support for k-means clustering, Gaussian EM clustering, group average agglomerative clustering, and dendrogram plots. For details, type help(nltk.cluster).

14.5 Other Python Libraries

There are many other Python libraries, and you can search for them with the help of the Python Package Index http://pypi.python.org/. Many libraries provide an interface to external software, such as relational databases (e.g. mysql-python) and large document collections (e.g. PyLucene). Many other libraries give access to file formats such as PDF, MSWord, and XML (pypdf, pywin32, xml.etree), RSS feeds (e.g. feedparser), and electronic mail (e.g. imaplib, email).

About this document...

UPDATED FOR NLTK 3.0. This is a chapter from Natural Language Processing with Python, by Steven Bird, Ewan Klein and Edward Loper, Copyright © 2014 the authors. It is distributed with the Natural Language Toolkit [http://nltk.org/], Version 3.0, under the terms of the Creative Commons Attribution-Noncommercial-No Derivative Works 3.0 United States License [http://creativecommons.org/licenses/by-nc-nd/3.0/us/].

This document was built on Tue 23 Jun 2015 13:44:45 AEST